Systems Engineering and Electronics, 2022, Vol. 44, Issue (11): 3486-3495. doi: 10.12305/j.issn.1001-506X.2022.11.24

• Guidance, Navigation and Control •

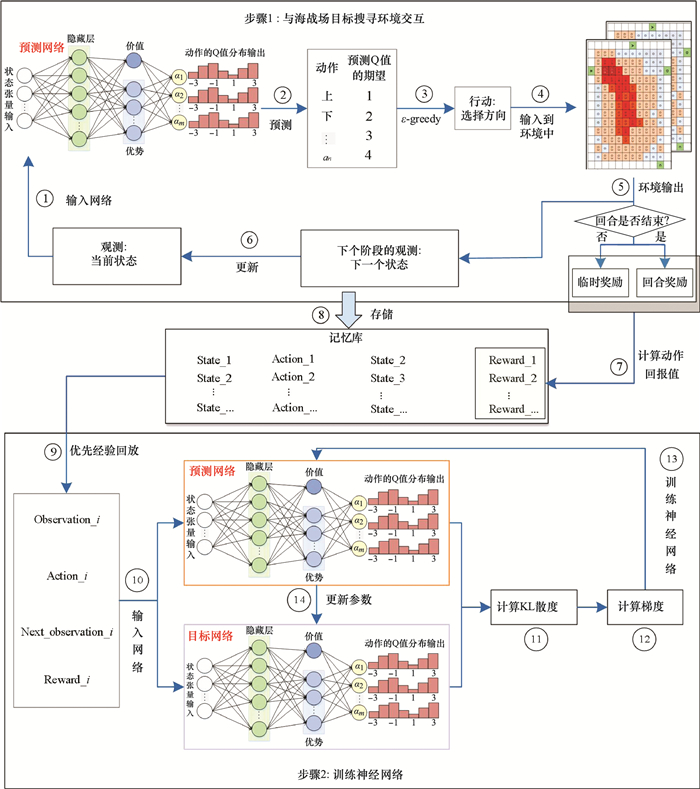

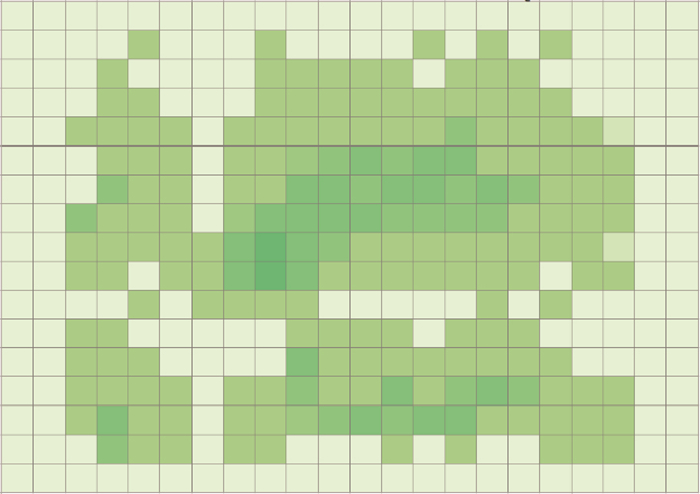

Target search path planning for naval battle field based on deep reinforcement learning

Qingqing YANG, Yingying GAO*, Yu GUO, Boyuan XIA, Kewei YANG

- College of Systems Engineering, National University of Defense Technology, Changsha 410073, China

Received: 2021-09-01 Online: 2022-10-26 Published: 2022-10-29

Contact: Yingying GAO

CLC Number:

Cite this article

Qingqing YANG, Yingying GAO, Yu GUO, Boyuan XIA, Kewei YANG. Target search path planning for naval battle field based on deep reinforcement learning[J]. Systems Engineering and Electronics, 2022, 44(11): 3486-3495.

| 1 | OTOTE D A, LI B, AI B, et al. A decision-making algorithm for maritime search and rescue plan[J]. Sustainability, 2019, 11(7): 2084-2099. doi: 10.3390/su11072084 |

| 2 | JIN Y Q, WANG N, SONG Y T, et al. Optimization model and algorithm to locate rescue bases and allocate rescue vessels in remote oceans[J]. Soft Computing, 2021, 25(4): 3317-3334. doi: 10.1007/s00500-020-05378-6 |

| 3 | GUO Y, YE Y Q, YANG Q Q, et al. A multi-objective INLP model of sustainable resource allocation for long-range maritime search and rescue[J]. Sustainability, 2019, 11(3): 929-953. doi: 10.3390/su11030929 |

| 4 | RAHMES M, CHESTER D, HUNT J, et al. Optimizing cooperative cognitive search and rescue UAVs[C]//Proc. of the Autonomous Systems: Sensors, Vehicles, Security and the Internet of Everything, 2018. |

| 5 | LIANG X Y, DU X S, WANG G L, et al. A deep reinforcement learning network for traffic light cycle control[J]. IEEE Trans. on Vehicular Technology, 2019, 68(2): 1243-1253. doi: 10.1109/TVT.2018.2890726 |

| 6 | WANG Y D, LIU H, ZHENG W B, et al. Multi-objective workflow scheduling with deep-Q-network-based multi-agent reinforcement learning[J]. IEEE Access, 2019, 7: 39974-39982. doi: 10.1109/ACCESS.2019.2902846 |

| 7 | LUONG N C, HOANG D T, GONG S, et al. Applications of deep reinforcement learning in communications and networking: a survey[J]. IEEE Communications Surveys and Tutorials, 2019, 21(4): 3133-3174. doi: 10.1109/COMST.2019.2916583 |

| 8 | MNIH V, KAVUKCUOGLU K, SILVER D, et al. Human-level control through deep reinforcement learning[J]. Nature, 2015, 518(7540): 529-533. doi: 10.1038/nature14236 |

| 9 | SHI T F, WANG L, HUANG Z R. Sequence to sequence multi-agent reinforcement learning algorithm[J]. Pattern Recognition and Artificial Intelligence, 2021, 34(3): 206-213. |

| 10 | MNIH V, KAVUKCUOGLU K, SILVER D, et al. Playing Atari with deep reinforcement learning[EB/OL]. [2021-10-12]. https://arxiv.org/abs/1312.5602. |

| 11 | SCHAUL T, QUAN J, ANTONOGLOU I, et al. Prioritized experience replay[EB/OL]. [2021-10-12]. https://arxiv.org/abs/1511.05952. |

| 12 | VAN HASSELT H, GUEZ A, SILVER D. Deep reinforcement learning with double Q-learning[C]//Proc. of the AAAI Conference on Artificial Intelligence, 2016. |

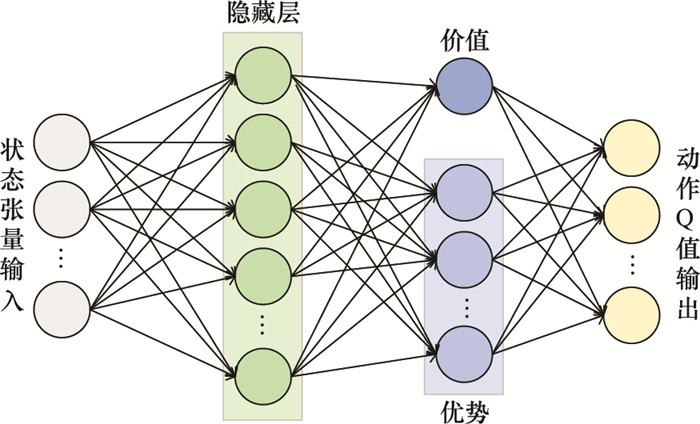

| 13 | WANG Z Y, SCHAUL T, HESSEL M, et al. Dueling network architectures for deep reinforcement learning[C]//Proc. of the International Conference on Machine Learning, 2016: 1995-2003. |

| 14 | BELLEMARE M G, DABNEY W, MUNOS R. A distributional perspective on reinforcement learning[C]//Proc. of the International Conference on Machine Learning, 2017: 449-458. |

| 15 | FORTUNATO M, AZAR M G, PIOT B, et al. Noisy networks for exploration[EB/OL]. [2021-10-12]. https://arxiv.org/abs/1706.10295. |

| 16 | HESSEL M, MODAYIL J, VAN HASSELT H, et al. Rainbow: combining improvements in deep reinforcement learning[C]//Proc. of the National Conference on Artificial Intelligence, 2018. |

| 17 | SUTTON R S, BARTO A G. Reinforcement learning: an introduction[M]. Cambridge: Massachusetts Institute of Technology Press, 1998. |

| 18 | SUTTON R S. Learning to predict by the methods of temporal differences[J]. Machine Learning, 1988, 3(1): 9-44. |

| 19 | HAUSKNECHT M, STONE P. Deep recurrent Q-learning for partially observable MDPs[EB/OL]. [2021-10-12]. https://arxiv.org/abs/1507.06527v4. |

| 20 | XUN Y B, HUANG C Q, WU W C, et al. Coverage search strategies for moving targets using multiple unmanned aerial vehicle teams[J]. Systems Engineering and Electronics, 2013, 35(3): 539-544. doi: 10.3969/j.issn.1001-506X.2013.03.15 |

| 21 | GAO Y Y. Research on UAV search planning method and application in maritime search and rescue[D]. Changsha: National University of Defense Technology, 2020. |

| 22 | XIONG W T, GELDER P V, YANG K W. A decision support method for design and operationalization of search and rescue in maritime emergency[J]. Ocean Engineering, 2020, 207: 107399-107415. doi: 10.1016/j.oceaneng.2020.107399 |

| 23 | GALLEGO A J, PERTUSA A, GIL P, et al. Detection of bodies in maritime rescue operations using unmanned aerial vehicles with multispectral cameras[J]. Journal of Field Robotics, 2019, 36(4): 782-796. doi: 10.1002/rob.21849 |

| 24 | GAO C Q, KOU Y X, LI Z W, et al. Cooperative coverage path planning for small UAVs[J]. Systems Engineering and Electronics, 2019, 41(6): 1294-1299. |

| 25 | YUE W, GUAN X H, WANG L Y. A novel searching method using reinforcement learning scheme for multi-UAVs in unknown environments[J]. Applied Sciences, 2019, 9(22): 4964-4978. doi: 10.3390/app9224964 |

| 26 | CHENG Y, ZHANG W D. Concise deep reinforcement learning obstacle avoidance for underactuated unmanned marine vessels[J]. Neurocomputing, 2018, 272: 63-73. doi: 10.1016/j.neucom.2017.06.066 |

| 27 | LI R P, ZHAO Z F, SUN Q, et al. Deep reinforcement learning for resource management in network slicing[J]. IEEE Access, 2018, 6: 74429-74441. doi: 10.1109/ACCESS.2018.2881964 |

| 28 | TAMPUU A, MATIISEN T, KODELJA D, et al. Multiagent cooperation and competition with deep reinforcement learning[J]. PLoS ONE, 2017, 12(4): e0172395. |
