[1] JI Y, LIN D F, WANG W, et al. Three-dimensional terminal angle constrained robust guidance law with autopilot lag consideration. Aerospace Science and Technology, 2019, 86: 160-176. doi: 10.1016/j.ast.2019.01.016

[2] RYOO C K, CHO H, TAHK M J. Time-to-go weighted optimal guidance with impact angle constraints. IEEE Trans. on Control Systems Technology, 2006, 14(3): 483-492. doi: 10.1109/TCST.2006.872525

[3] JEON I S, LEE J I, TAHK M J. Impact-time-control guidance law for anti-ship missiles. IEEE Trans. on Control Systems Technology, 2006, 14(2): 260-266. doi: 10.1109/TCST.2005.863655

[4] DONG Y E, SHI M M, SUN Z W. Satellite proximate interception vector guidance based on differential games. Chinese Journal of Aeronautics, 2018, 31(6): 1352-1361. doi: 10.1016/j.cja.2018.03.012

[5] MARCHIDAN A, BAKOLAS E. Collision avoidance for an unmanned aerial vehicle in the presence of static and moving obstacles. Journal of Guidance, Control, and Dynamics, 2020, 43(1): 96-110. doi: 10.2514/1.G004446

[6] XU X G, WEI Z Y, REN Z, et al. Time-varying fault-tolerant formation tracking based cooperative control and guidance for multiple cruise missile systems under actuator failures and directed topologies. Journal of Systems Engineering and Electronics, 2019, 30(3): 587-600. doi: 10.21629/JSEE.2019.03.16

[7] DARSHAN D, ARCHANA C, DEBAJYOTI M. Artificial intelligence based missile guidance system. Proc. of the 7th International Conference on Signal Processing and Integrated Networks, 2020: 873-878.

[8] JIE Z, LI H D, BIN X. A joint mid-course and terminal course cooperative guidance law for multi-missile salvo attack. Chinese Journal of Aeronautics, 2018, 31(6): 1311-1326. doi: 10.1016/j.cja.2018.03.016

[9] WANG P, ZHANG X B, ZHU J H. Integrated missile guidance and control: a novel explicit reference governor using a disturbance observer. IEEE Trans. on Industrial Electronics, 2018, 66(7): 5487-5496.

[10] FU S N, LIU X D, ZHANG W J, et al. Multiconstraint adaptive three-dimensional guidance law using convex optimization. Journal of Systems Engineering and Electronics, 2020, 31(4): 791-803.

[11] FANG M, GROEN F C A. Collaborative multi-agent reinforcement learning based on experience propagation. Journal of Systems Engineering and Electronics, 2013, 24(4): 683-689. doi: 10.1109/JSEE.2013.00079

[12] SHALUMOV V. Cooperative online guide-launch-guide policy in a target-missile-defender engagement using deep reinforcement learning. Aerospace Science and Technology, 2020, 104: 105996. doi: 10.1016/j.ast.2020.105996

[13] YOU S X, DIAO M, GAO L P, et al. Target tracking strategy using deep deterministic policy gradient. Applied Soft Computing, 2020, 95: 106490. doi: 10.1016/j.asoc.2020.106490

[14] GAUDET B, LINARES R, FURFARO R. Deep reinforcement learning for six degree-of-freedom planetary landing. Advances in Space Research, 2020, 65(7): 1723-1741. doi: 10.1016/j.asr.2019.12.030

[15] LI Y, QIU X H, LIU X D, et al. Deep reinforcement learning and its application in autonomous fitting optimization for attack areas of UCAVs. Journal of Systems Engineering and Electronics, 2020, 31(4): 734-742. doi: 10.23919/JSEE.2020.000048

[16] YAN C, XIANG X J, WANG C. Towards real-time path planning through deep reinforcement learning for a UAV in dynamic environments. Journal of Intelligent & Robotic Systems, 2020, 98(2): 297-309.

[17] WANG D W, FAN T X, HAN T, et al. A two-stage reinforcement learning approach for multi-UAV collision avoidance under imperfect sensing. IEEE Robotics and Automation Letters, 2020, 5(2): 3098-3105. doi: 10.1109/LRA.2020.2974648

[18] YUE W, GUAN X H, WANG L Y. A novel searching method using reinforcement learning scheme for multi-UAVs in unknown environments. Applied Sciences, 2019, 9(22): 4964. doi: 10.3390/app9224964

[19] LI G F, WU Y, XU P. Adaptive fault-tolerant cooperative guidance law for simultaneous arrival. Aerospace Science and Technology, 2018, 82: 243-251.

[20] LI G F, WU Y, XU P. Fixed-time cooperative guidance law with input delay for simultaneous arrival. International Journal of Control, 2021, 94(6): 1664-1673. doi: 10.1080/00207179.2019.1662947

[21] GAUDET B, LINARES R, FURFARO R. Adaptive guidance and integrated navigation with reinforcement meta-learning. Acta Astronautica, 2020, 169: 180-190. doi: 10.1016/j.actaastro.2020.01.007

[22] LIANG C, WANG W H, LIU Z H, et al. Range-aware impact angle guidance law with deep reinforcement meta-learning. IEEE Access, 2020, 8: 152093-152104. doi: 10.1109/ACCESS.2020.3017480

[23] HU Q L, HAN T, XIN M. Sliding-mode impact time guidance law design for various target motions. Journal of Guidance, Control, and Dynamics, 2019, 42(1): 136-148. doi: 10.2514/1.G003620

[24] ZHANG W J, FU S N, LI W, et al. An impact angle constraint integral sliding mode guidance law for maneuvering targets interception. Journal of Systems Engineering and Electronics, 2020, 31(1): 168-184.

[25] LI G F, LI Q, WU Y J, et al. Leader-following cooperative guidance law with specified impact time. Science China: Technological Sciences, 2020, 63(11): 2349-2356. doi: 10.1007/s11431-020-1669-3

[26] ZHANG W, SONG K, RONG X W, et al. Coarse-to-fine UAV target tracking with deep reinforcement learning. IEEE Trans. on Automation Science and Engineering, 2018, 16(4): 1522-1530.

[27] QIE H, SHI D X, SHEN T L, et al. Joint optimization of multi-UAV target assignment and path planning based on multi-agent reinforcement learning. IEEE Access, 2019, 7: 146264-146272. doi: 10.1109/ACCESS.2019.2943253

[28] HONG D, KIM M, PARK S. Study on reinforcement learning-based missile guidance law. Applied Sciences, 2020, 10(18): 6567. doi: 10.3390/app10186567

[29] CHENG L, LU H, LEI T, et al. Path planning for anti-ship missile using tangent based Dubins path. Proc. of the 2nd International Conference on Intelligent Autonomous Systems, 2019: 175-180.

[30] GUO H, FU W X, FU B, et al. Smart homing guidance strategy with control saturation against a cooperative target-defender team. Journal of Systems Engineering and Electronics, 2019, 30(2): 366-383. doi: 10.21629/JSEE.2019.02.15

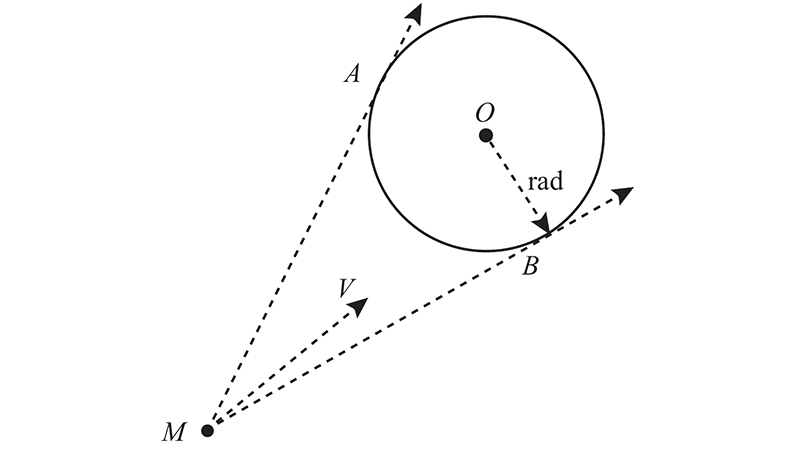

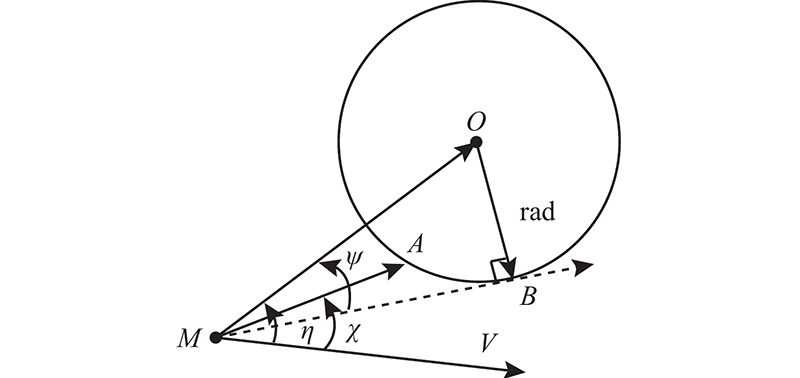

[31] YU W B, CHEN W C. Guidance law with circular no-fly zone constraint. Nonlinear Dynamics, 2014, 78(3): 1953-1971. doi: 10.1007/s11071-014-1571-2

[32] WEISS M, SHIMA T. Linear quadratic optimal control-based missile guidance law with obstacle avoidance. IEEE Trans. on Aerospace and Electronic Systems, 2018, 55(1): 205-214.

[33] FAN S P, QI Q, LU K F, et al. Autonomous collision avoidance technique of cruise missiles based on modified artificial potential method. Transactions of Beijing Institute of Technology, 2018, 38(8): 828-834.

[34] CHAYSRI P, BLEKAS K, VLACHOS K. Multiple mini-robots navigation using a collaborative multiagent reinforcement learning framework. Advanced Robotics, 2020, 34(13): 902-916. doi: 10.1080/01691864.2020.1757507

[35] WANG C, WANG J, SHEN Y, et al. Autonomous navigation of UAVs in large-scale complex environments: a deep reinforcement learning approach. IEEE Trans. on Vehicular Technology, 2019, 68(3): 2124-2136. doi: 10.1109/TVT.2018.2890773

[36] LI B H, WU Y. Path planning for UAV ground target tracking via deep reinforcement learning. IEEE Access, 2020, 8: 29064-29074. doi: 10.1109/ACCESS.2020.2971780

Yunjie WU1,2,3, Guofei LI4,*